Research Speed Is Not Research Confidence

AI can make research work feel faster, but speed alone does not make a workflow trustworthy. A stronger academic process still needs two different safeguards: one for building a literature review from real papers, and one for checking whether the cit

A lot of researchers now know enough not to trust AI blindly.

But many of them still make a quieter mistake: they confuse speed with confidence.

They think that if a workflow feels fast, smooth, and organized, it must also be academically strong.

That is not true.

Fast output can still be built on weak paper selection.

Clean prose can still sit on unverified citations.

A workflow can save time and still fail the credibility test.

Research confidence does not come from how quickly a draft appears.

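It comes from whether the workflow can answer two hard questions: was the review built from a disciplined set of real papers, and has each citation been verified against its original source?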

If the answer to either question is weak, the draft is weaker than it looks.

That is why academic confidence usually depends on control, not just convenience.

The first test has nothing to do with writing style.

It is about whether the workflow can move from topic to paper set in a disciplined way.

A reliable review workflow should help you:

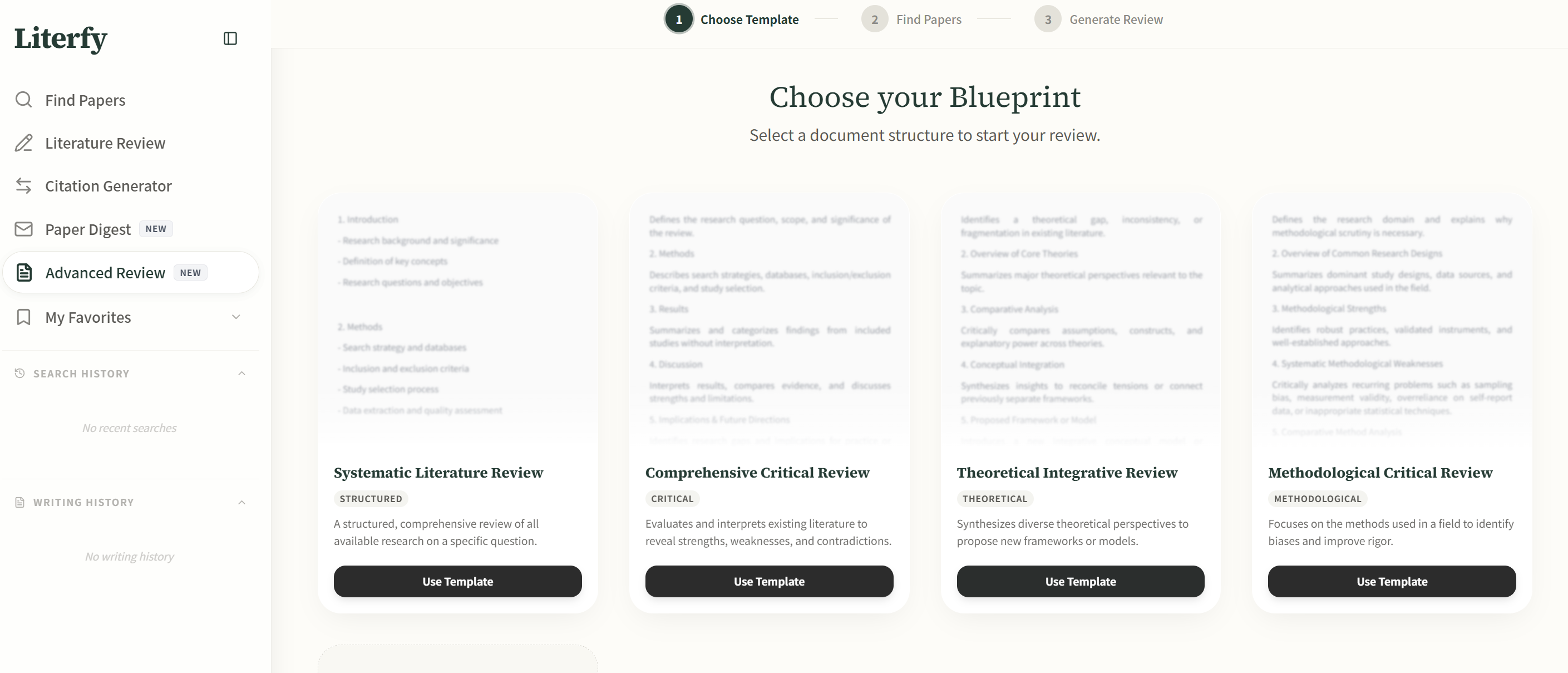

This is where Literfy fits naturally.

Its value is not just that it helps people generate review text. Its value is that it supports the paper-first side of the process: search, shortlist, organize, outline, and write from real papers instead of from generic AI confidence.

That distinction matters because a literature review is not just a writing task. It is a source-structure task first.

The second test starts where many researchers get careless.

Once the writing looks coherent, the citation layer often gets treated as if it has already been solved.

But references can fail in ways that are easy to miss:

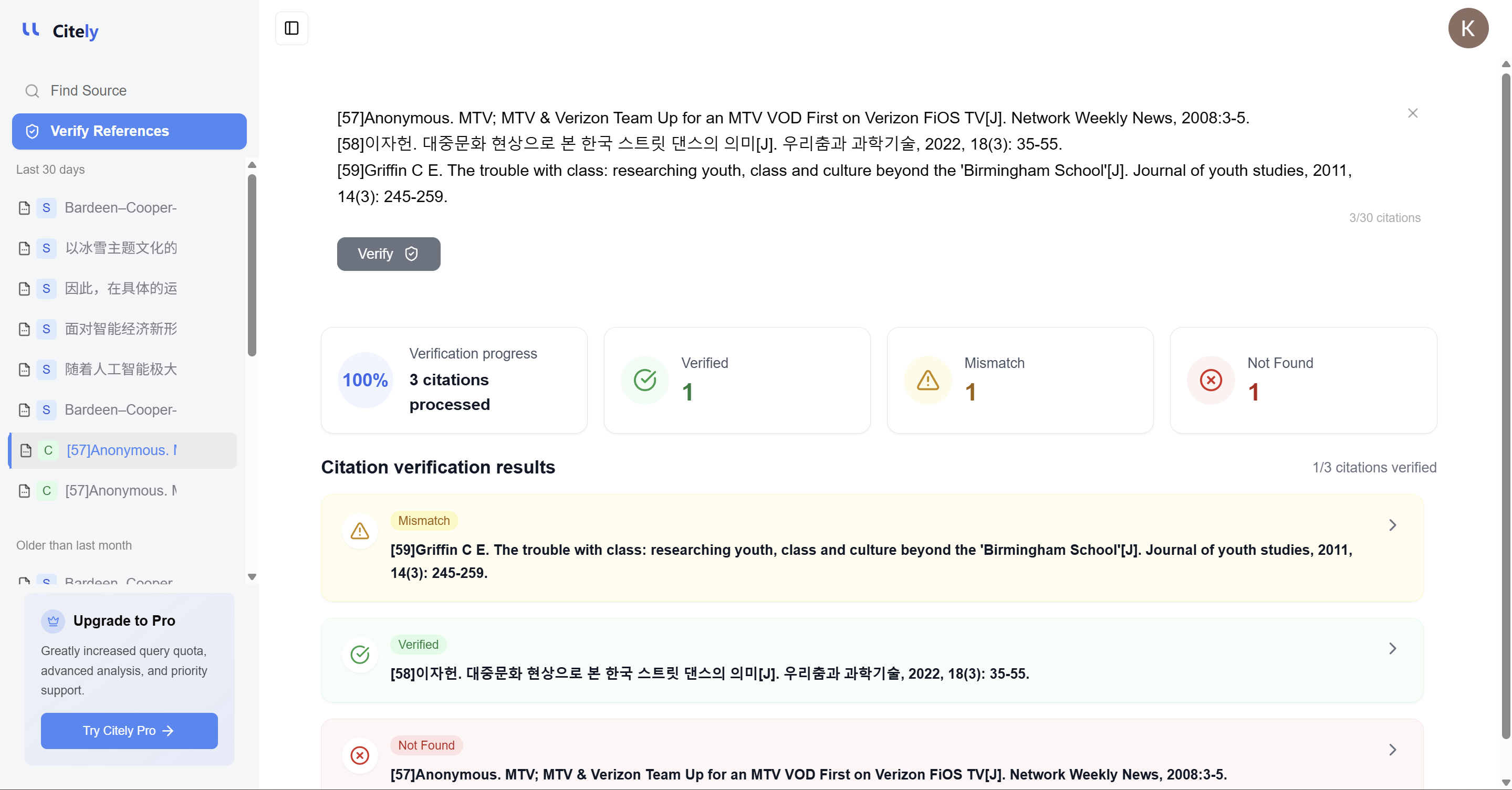

This is where Citely matters.

Its role is not to make a bibliography look prettier. Its role is to help researchers verify references, trace original sources, and decide whether a citation deserves to stay in the draft.

That is a very different job from drafting a review.

The weakest AI-assisted research workflows usually break in one of three places.

First, the workflow retrieves papers quickly, but not selectively.

You get a pile of results, but not a defensible review base. The draft sounds informed, yet the underlying literature coverage is too thin or too noisy.

Second, an outline appears early, which feels productive.

But if the paper set is still unstable, the structure is only a guess wearing academic formatting.

The third failure is the most dangerous.

A citation with authors, title, venue, and DOI looks finished. Researchers move on because the draft feels clean. But unless someone verifies that record properly, the draft may be carrying weak evidence behind polished language.
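
One way to picture what verifying a record involves is to compare the citation as it appears in the draft against the record you get by tracing the original source yourself. The sketch below is only an illustration under assumptions: `Record`, `mismatched_fields`, and the sample values are hypothetical placeholders, not part of Citely or any other tool, and the source record is assumed to have been looked up by a person.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """Bibliographic fields, used for both the cited and the traced record."""
    authors: tuple
    title: str
    venue: str
    year: int
    doi: str


def _norm(text: str) -> str:
    """Lowercase and collapse punctuation so cosmetic differences are ignored."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def mismatched_fields(cited: Record, source: Record) -> list:
    """Return the names of fields where the citation disagrees with the source."""
    problems = []
    if _norm(cited.title) != _norm(source.title):
        problems.append("title")
    if [_norm(a) for a in cited.authors] != [_norm(a) for a in source.authors]:
        problems.append("authors")
    if _norm(cited.venue) != _norm(source.venue):
        problems.append("venue")
    if cited.year != source.year:
        problems.append("year")
    if cited.doi.lower() != source.doi.lower():
        problems.append("doi")
    return problems


# Made-up placeholder values, not real publications.
cited = Record(("A. Author", "B. Writer"), "An Example Title",
               "Journal of Examples", 2021, "10.0000/example.1")
source = Record(("A. Author", "B. Writer"), "An example title.",
                "Journal of Examples", 2020, "10.0000/example.1")

print(mismatched_fields(cited, source))  # prints ['year']
```

The citation here looks complete, yet it still disagrees with the traced record on the year. An empty result only means the fields agree; it does not show that the paper supports the claim it is cited for.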

Instead of asking one tool to make the entire research process feel seamless, it is more useful to ask whether each part of the workflow has the right kind of control.

That usually means separating two layers.

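The paper layer is about searching, shortlisting, and organizing real papers before outlining and writing from them.

The citation layer is about verifying references, tracing original sources, and deciding whether each citation deserves to stay in the draft.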

The workflow becomes stronger when these two layers support each other without pretending to be the same task.

In low-stakes writing, speed can be enough.

In academic work, it is not.

Whatever kind of academic document you are writing, the cost of false confidence is high.

A weak paper set produces weak synthesis.

An unverified citation layer weakens the argument even when the prose sounds strong.

That is why the best AI-assisted workflow is rarely the one with the fewest visible steps. It is the one with the clearest checkpoints.
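
A rough way to make checkpoints explicit is to record them alongside the draft and list whatever is still open. This is a minimal sketch under assumptions: `Draft`, `open_checkpoints`, and the sample keys are hypothetical names chosen for illustration, with one check for the paper set and one for the citations.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    shortlisted_papers: list = field(default_factory=list)
    paper_set_reviewed: bool = False  # a person has confirmed the shortlist
    citations: dict = field(default_factory=dict)  # citation key -> verified?


def open_checkpoints(draft: Draft) -> list:
    """List the checkpoints that still block the draft, one message per layer."""
    issues = []
    if not draft.shortlisted_papers:
        issues.append("paper layer: no shortlisted papers")
    elif not draft.paper_set_reviewed:
        issues.append("paper layer: shortlist not yet reviewed")
    unverified = sorted(key for key, ok in draft.citations.items() if not ok)
    if unverified:
        issues.append("citation layer: unverified " + ", ".join(unverified))
    return issues


# Placeholder keys, not real papers.
draft = Draft(
    shortlisted_papers=["paper-a", "paper-b"],
    paper_set_reviewed=True,
    citations={"paper-a": True, "paper-b": False},
)

print(open_checkpoints(draft))  # prints ['citation layer: unverified paper-b']
```

Keeping the two checks separate means a polished draft cannot pass on fluency alone: each layer has to be closed on its own terms.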

Research speed is useful.

Research confidence is harder.

If you want both, the answer is not to flatten the whole workflow into one AI interaction. The better answer is to use the right tools for the right control points.

Use a paper-first workflow to build the review from real sources.

Use a verification workflow to make sure the citation layer is actually trustworthy.

That is how speed becomes useful instead of dangerous.
