The Most Dangerous Citation Error Is the One That Looks Real

The worst citation errors are not the obvious ones. They are the references that look complete, sound academic, and pass a quick glance, but still point to the wrong source, a blended source, or no real source at all.

The most dangerous citation error is not a missing comma or the wrong style format.

It is a citation that looks real enough to be trusted when it should not be.

That is the kind of error that survives.

Researchers usually catch the obvious mistakes. A broken title, a missing year, a visibly incomplete reference. Those are annoying, but they are also easier to fix because they announce themselves.

The harder problem is the citation that appears credible.

It has author names. It has a title. It has a journal. It may even have a DOI. On the surface, it looks like a normal academic reference. But underneath, something is off. The details do not belong together. The DOI resolves to another paper. The title has been distorted. Or the source cannot be traced back cleanly at all.

That is the error worth worrying about.

Why is it so dangerous? Because it moves through the workflow without resistance.

Once a citation looks polished, people stop questioning it. It gets copied into notes, saved into a reference manager, inserted into a manuscript, and eventually formatted into a bibliography. At every step, it gains a little more authority.

By the time someone checks it closely, the problem is no longer local. It has already spread.

That is why this kind of citation error is more expensive than a visibly bad one. It wastes time later, weakens credibility more quietly, and is much more likely to survive into final output.

Citation errors that look real usually come from four places.

AI-generated references

This is the most obvious source now.

AI tools are very good at producing references that look plausible. That is exactly what makes them risky. The citation can feel complete even when the underlying source is wrong, blended, or invented.

Secondary copying

A citation gets copied from a blog, a discussion page, another bibliography, or a summary tool instead of from the original source record.

Once that happens, errors travel easily.

Metadata mismatch

Sometimes the source exists, but the title, authors, year, venue, and DOI do not actually belong to the same paper.

This is one of the hardest errors to notice because each piece may look individually reasonable.

Workflow haste

The citation is saved before it is verified.

That usually happens when the workflow is optimized for writing speed, not source confidence.

The role of formatting is the part many people still get wrong.

Formatting can improve consistency, but it does not improve truth.

A fake or mismatched citation can still be formatted perfectly in APA, MLA, Chicago, or any other style. It can look polished and still fail the one test that matters most: can it be traced back to a real, original source?

That is why citation formatting and citation verification should never be treated as the same task.

One is presentation.

The other is evidence control.
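The Python sketch below makes the split concrete. `format_apa` is a hypothetical, simplified APA-like formatter, not a complete implementation of APA 7. The reference it formats is invented, with placeholder authors and a placeholder DOI. Nothing in the formatter checks whether the source exists, and that is the point.

```python
from dataclasses import dataclass


@dataclass
class Reference:
    """A citation as it appears in a draft (field names are illustrative assumptions)."""
    authors: list[str]  # "Family, G." style strings
    year: int
    title: str
    journal: str
    doi: str


def format_apa(ref: Reference) -> str:
    """Render a simplified APA-like string. Presentation only: no lookup, no verification."""
    if len(ref.authors) == 1:
        names = ref.authors[0]
    else:
        names = ", ".join(ref.authors[:-1]) + ", & " + ref.authors[-1]
    return f"{names} ({ref.year}). {ref.title}. {ref.journal}. https://doi.org/{ref.doi}"


# A made-up reference: authors, title, journal, and DOI are placeholders, not a real paper.
invented = Reference(
    authors=["Doe, J.", "Roe, R."],
    year=2021,
    title="Plausible results in a plausible journal",
    journal="Journal of Plausible Studies",
    doi="10.0000/example.0001",
)

print(format_apa(invented))
```

The output is clean and consistent. It is still evidence of nothing, because no step ever asked whether the paper exists.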

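Instead of asking, "Does this citation look correct?", a stronger workflow asks, "Can this citation be traced back to a real, original source, and do its title, authors, year, venue, and DOI all belong to that same source?"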

That is a much better standard.

It shifts the workflow away from surface confidence and toward source-backed confidence.

And in academic work, that distinction matters a lot.

The good news is that these errors are catchable if you check them at the right point in the workflow.

The right sequence is:

First, search the title in real academic sources

Do not begin with formatting. Begin with retrieval.

Check the title across real academic databases and source records.
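If you want to script this step, the sketch below uses the public Crossref REST API as one example of a source record. It assumes the `https://api.crossref.org/works` endpoint with its `query.bibliographic` and `rows` parameters. The sketch only builds the request URL and ranks returned titles by similarity. The network call is left out so the logic can be checked offline. The helper names and the 0.9 similarity threshold are arbitrary assumptions, not a standard.

```python
import difflib
import re
from urllib.parse import urlencode

CROSSREF_WORKS = "https://api.crossref.org/works"


def crossref_search_url(title: str, rows: int = 5) -> str:
    """Build a Crossref bibliographic search URL for a cited title (request not sent here)."""
    return CROSSREF_WORKS + "?" + urlencode({"query.bibliographic": title, "rows": rows})


def normalize_title(title: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    title = re.sub(r"[^\w\s]", " ", title.lower())
    return " ".join(title.split())


def best_title_match(cited: str, candidates: list[str], threshold: float = 0.9):
    """Return (candidate, score) for the closest title, or None if nothing clears the threshold."""
    scored = [
        (c, difflib.SequenceMatcher(None, normalize_title(cited), normalize_title(c)).ratio())
        for c in candidates
    ]
    if not scored:
        return None
    best = max(scored, key=lambda pair: pair[1])
    return best if best[1] >= threshold else None
```

A score below the threshold does not prove a citation is fake. It only means the title needs a closer manual look before anything else happens.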

Second, compare the full metadata

Do the authors match? Does the year match? Does the DOI resolve to the same paper? Does the venue line up with the actual record?
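Once you have a record to compare against, the comparison itself is mechanical. The sketch assumes a Crossref-style `message` object with the following fields:

- a `title` list
- `author` entries with `family` names
- `issued.date-parts`
- `container-title`
- `DOI`

The cited-reference keys and the `compare_metadata` and `normalize_doi` helpers are hypothetical. The sample record and the "blended" citation are placeholders, not a real paper. DOIs are compared after stripping a resolver prefix and lowercasing, because DOI names are case-insensitive.

```python
import re


def normalize_doi(doi: str) -> str:
    """Strip common resolver prefixes and lowercase (DOI names are case-insensitive)."""
    doi = re.sub(r"^(https?://(dx\.)?doi\.org/|doi:\s*)", "", doi.strip(), flags=re.IGNORECASE)
    return doi.lower()


def _norm(text: str) -> str:
    """Lowercase, drop punctuation, collapse whitespace."""
    return " ".join(re.sub(r"[^\w\s]", " ", text.lower()).split())


def compare_metadata(cited: dict, record: dict) -> list[str]:
    """Return the fields where a cited reference disagrees with a Crossref-style record."""
    problems = []

    if _norm(cited["title"]) != _norm((record.get("title") or [""])[0]):
        problems.append("title")

    record_families = {_norm(a.get("family", "")) for a in record.get("author", [])}
    if {_norm(name) for name in cited["author_families"]} != record_families:
        problems.append("authors")

    date_parts = record.get("issued", {}).get("date-parts", [[None]])
    if cited["year"] != date_parts[0][0]:
        problems.append("year")

    if _norm(cited["venue"]) != _norm((record.get("container-title") or [""])[0]):
        problems.append("venue")

    if normalize_doi(cited["doi"]) != normalize_doi(record.get("DOI", "")):
        problems.append("doi")

    return problems


# Placeholder record and a citation that blends in the wrong year and DOI.
record = {
    "DOI": "10.0000/EXAMPLE.42",
    "title": ["Checking Citations at Scale"],
    "author": [{"given": "Jane", "family": "Doe"}, {"given": "Rick", "family": "Roe"}],
    "issued": {"date-parts": [[2020, 5, 1]]},
    "container-title": ["Journal of Examples"],
}
blended = {
    "title": "Checking citations at scale",
    "author_families": ["Roe", "Doe"],
    "year": 2019,
    "venue": "Journal of Examples",
    "doi": "https://doi.org/10.0000/example.43",
}
print(compare_metadata(blended, record))  # ['year', 'doi']
```

The exact set match on author names is deliberately strict. A practical checker would also need to tolerate "et al." truncation and transliterated names. Even so, each field in the blended citation looks reasonable on its own, and only the comparison exposes the mismatch.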

Third, trace the source back to the original

Do not stop at another place where the citation is repeated. Stop only when you reach a reliable original record.

Fourth, only then move it into the writing workflow

Once the source is verified, it can safely move into notes, libraries, and drafts.
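One way to enforce this order is to make verification a gate that a reference must pass before anything can save it. In this sketch, the `Status` values, the `Citation` and `VerifiedLibrary` classes, and the `add` method are all hypothetical. They stand in for whatever notes app or reference manager you actually use.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    UNCHECKED = "unchecked"
    FOUND = "found"               # step 1: the title was found in a real source record
    METADATA_MATCHED = "matched"  # step 2: authors, year, venue, and DOI agree
    ORIGINAL_TRACED = "traced"    # step 3: confirmed against the original record


@dataclass
class Citation:
    title: str
    status: Status = Status.UNCHECKED


@dataclass
class VerifiedLibrary:
    """A reference store that refuses anything not yet traced to an original record."""
    items: list = field(default_factory=list)

    def add(self, citation: Citation) -> None:
        if citation.status is not Status.ORIGINAL_TRACED:
            raise ValueError(
                f"{citation.title!r} is {citation.status.value}; verify it before saving"
            )
        self.items.append(citation)
```

The gate is blunt on purpose. The failure described earlier is a citation that gets saved because it looked finished, so the save step is where the check belongs.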

That order makes the rest of the workflow much easier to trust.

The more researchers use AI to brainstorm, summarize, and draft, the more fragile the citation layer becomes.

That does not mean AI is useless. It means the verification step has to become stricter.

In older workflows, some citation problems came from manual sloppiness.

In newer workflows, many of them come from plausible automation.

That changes the risk profile. We are no longer only fixing messy references. We are increasingly dealing with references that look clean but are evidentially weak.

That is a more serious problem.

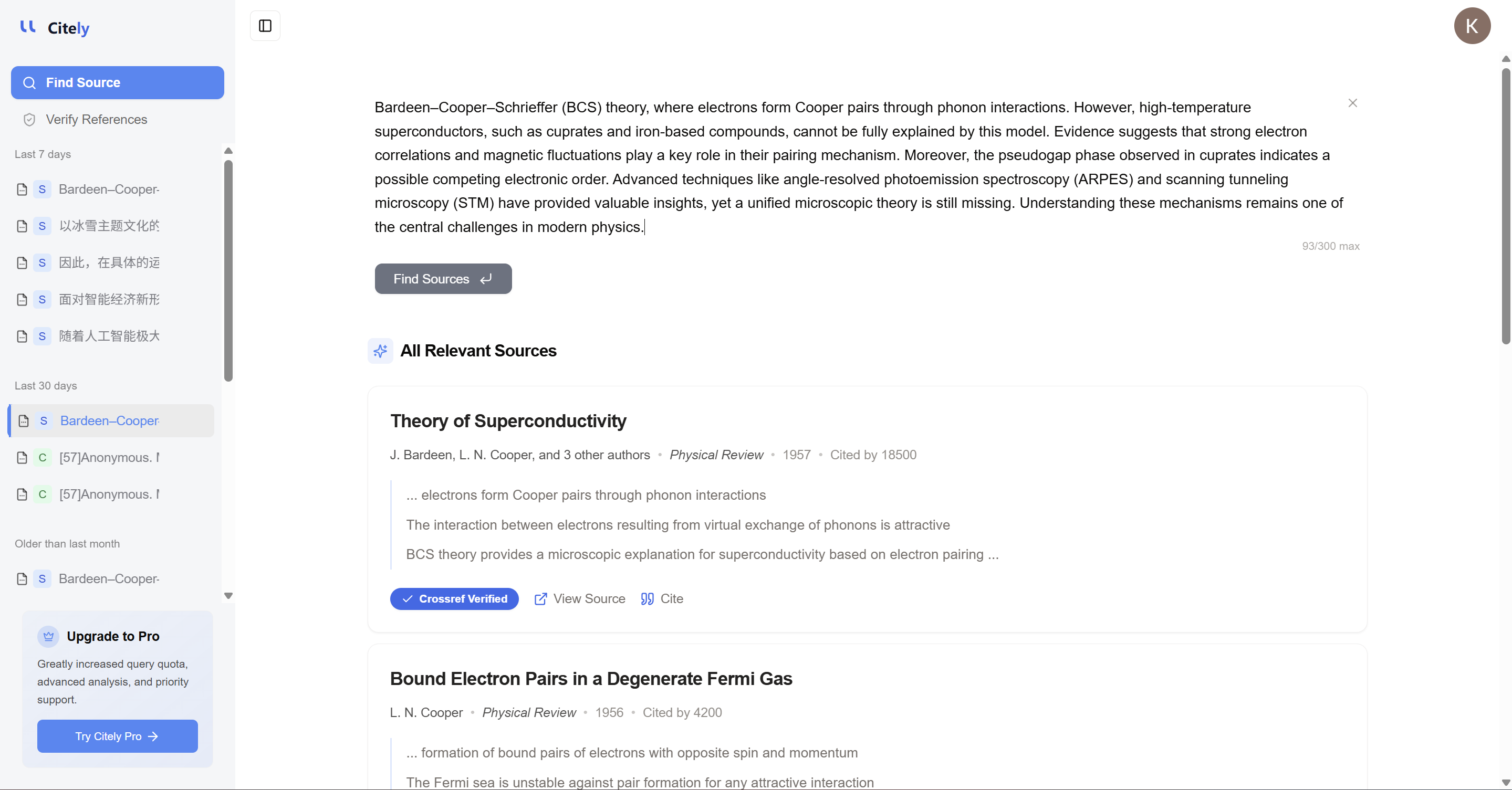

This is exactly why Citely matters.

The need is not just to manage references or clean up formatting. The deeper need is to check whether a citation is real, trace the original source, and catch the kind of reference error that looks trustworthy before it settles into the research workflow.

That is the difference between a citation tool that helps you organize sources and a workflow that helps you trust them.

The citation errors that do the most damage are not the ones that look broken.

They are the ones that look finished.

If a reference seems polished, that is not a reason to trust it more. It is a reason to verify it properly.

Because in research writing, the most dangerous citation is often the one that looks real enough to escape inspection.
